Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

The Optimus Gen 3 does not run on traditional robotics code. In typical robotics, engineers write thousands of lines of “if-then” statements: if there is an obstacle, then move left. Tesla has discarded this legacy approach in favor of Embodied AI.

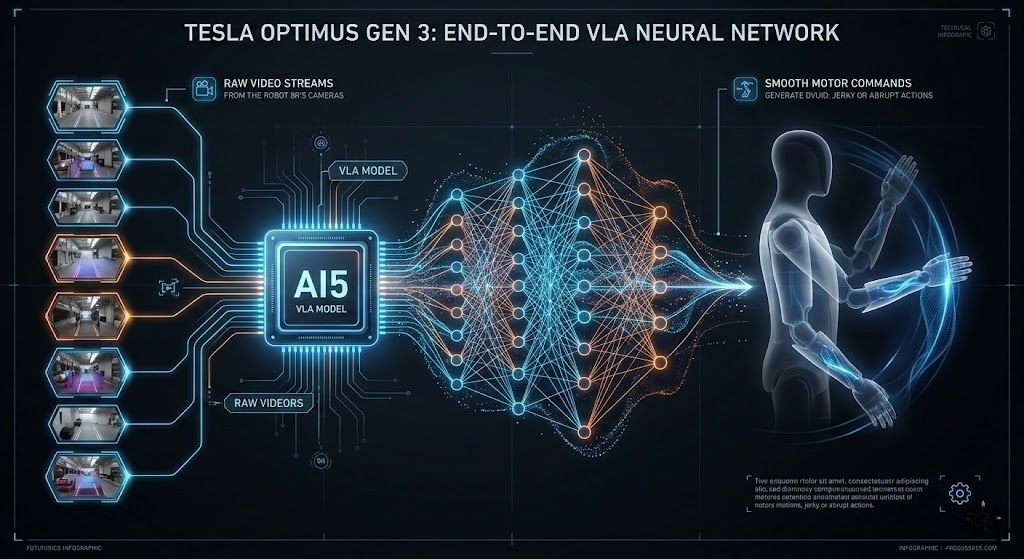

Tesla’s Optimus Gen 3 runs on the AI5 Hardware 5.0 chip. This custom silicon acts as the robot's brain, and it runs an end-to-end neural network that turns live video directly into movement without manual programming. Tesla's cars are built on a similar base, and this shared DNA between the car and the bot allows Tesla to apply billions of miles of driving data to the problem of walking and object manipulation.

Core Specifications Comparison of Optimus Gen 2/3 (2026)

| Key Parameter | Optimus Gen 2 (2024/25) | Optimus Gen 3 (2026) | Notes |
| --- | --- | --- | --- |
| Hand Degrees of Freedom (DoF) | 11 DoF | 22 DoF | Doubled dexterity for complex tool use |
| Total Body DoF | 28+ DoF | 50+ DoF | Enhanced torso and neck coordination |
| Battery Capacity | 2.3 kWh | 2.3 kWh (Optimized) | Same physical footprint; higher energy density |
| Continuous Runtime | ~4–5 Hours | 10–12 Hours | Driven by AI5 efficiency and actuator optimization |
| Walking Speed | 5 km/h | 8 km/h | More natural gait; capable of light jogging |
| Payload Capacity | 20 kg | 20 kg (Carrying) / 68 kg (Deadlift) | High-strength lightweight composite structure |
| Control Core | HW4 (Custom) | AI5 Dual-Redundant | 5×+ compute power for local vision processing |
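
The runtime figures in the table imply an average power draw, since the stated capacity is 2.3 kWh for both generations. The short calculation below is only a rough check. It assumes the full capacity is usable, which real battery packs rarely allow, and the function name is illustrative.

```python
# Average power draw implied by the table's capacity and runtime figures.
# Assumption: the full 2.3 kWh is usable, which real packs rarely allow.

BATTERY_KWH = 2.3


def average_power_watts(capacity_kwh: float, runtime_hours: float) -> float:
    """Return the mean power draw in watts for a given runtime."""
    if runtime_hours <= 0:
        raise ValueError("runtime_hours must be positive")
    return capacity_kwh * 1000.0 / runtime_hours


# Runtime ranges taken from the comparison table (hours).
RUNTIMES = {"Gen 2": (4.0, 5.0), "Gen 3": (10.0, 12.0)}

for name, (short_h, long_h) in RUNTIMES.items():
    high_w = average_power_watts(BATTERY_KWH, short_h)  # shorter runtime, higher draw
    low_w = average_power_watts(BATTERY_KWH, long_h)
    print(f"{name}: roughly {low_w:.0f}-{high_w:.0f} W average")
```

Under that assumption, the Gen 3 figures work out to roughly 190 to 230 W on average, against 460 to 575 W for Gen 2.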

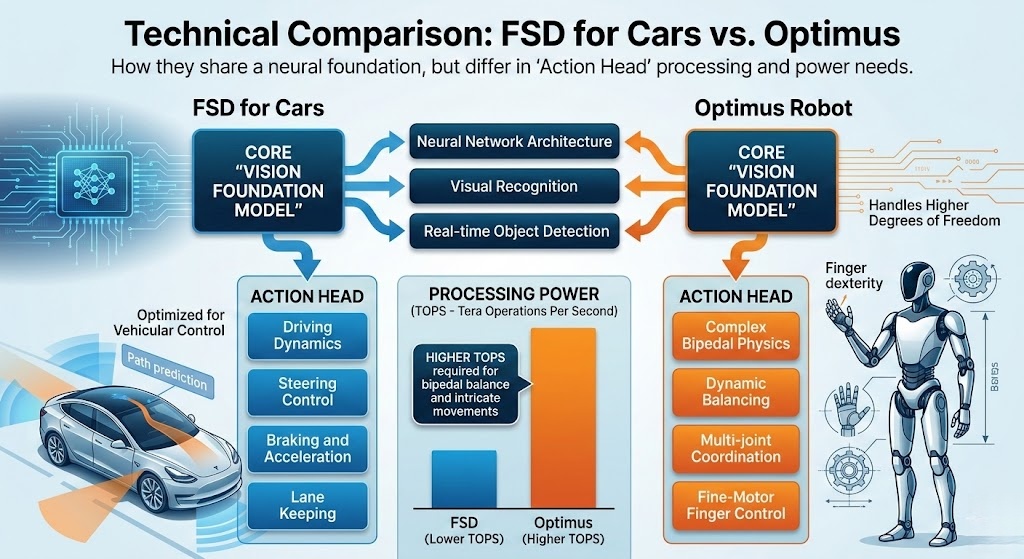

To grasp how the Optimus brain works, compare it to the system used in Tesla cars. Both share a similar base. However, the “Action Head” within the neural network is built quite differently for each task.

The core “Vision Foundation Model” is the same in both systems. However, the Optimus version requires higher TOPS (tera operations per second) to handle the complex physics of bipedal balance and fine motor control in the fingers. The table below compares the two:

| Aspect | FSD for Cars | FSD for Optimus |
| --- | --- | --- |
| AI Architecture | End-to-end neural networks processing video inputs for perception, planning, and control; trained on real-world data for autonomous navigation. | Similar end-to-end neural networks adapted from FSD, with emphasis on occupancy networks for 3D space understanding and task execution. |
| Hardware Compute | Tesla AI4 chip with dual redundant systems for failover safety, handling real-time inference. | Identical AI4 chip integrated into the robot’s chest for unified processing across Tesla’s ecosystem. |
| Sensors | Primarily vision-only with 8-9 cameras; some models include radar for depth; focuses on environmental mapping for driving. | Vision-based cameras plus force-torque sensors, IMUs, and tactile feedback for balance and interaction. |
| Actuators/Outputs | Simple controls: steering, acceleration, braking, and signaling; limited degrees of freedom (DOF). | Complex multi-joint system with 28-40 DOF overall, including hands with up to 22 DOF for dexterity. |
| Training Data | Billions of miles from Tesla fleet vehicles, focusing on driving scenarios and edge cases. | Combines FSD-derived data with teleoperation, simulation, and real-world imitation learning for tasks. |
| Environment & Use Cases | Structured roads with traffic rules; goal is safe, efficient point-to-point travel without physical contact. | Unstructured human spaces; emphasizes interaction, manipulation, and versatile tasks like chores or manufacturing. |
| Challenges | Handling rare driving events (e.g., weather, pedestrians); achieving reliability in rule-based settings. | Dexterity, balance recovery, and broad task generalization; safety in close human proximity. |
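
The idea of one shared vision model feeding different “Action Heads” can be illustrated with a toy network. This is only a sketch of the general pattern, not Tesla's architecture. The layer sizes, the random weights, and the names `backbone`, `car_head`, and `robot_head` are all assumptions made for illustration.

```python
import math
import random

# Toy illustration of one shared "vision" backbone feeding two task-specific
# action heads. All sizes and weights are hypothetical, not Tesla's.

PIXELS = 16              # a flattened 4x4 "image" stands in for camera video
FEATURE_DIM = 8          # size of the shared feature vector (assumed)
CAR_OUTPUTS = 3          # steering, acceleration, braking
ROBOT_OUTPUTS = 22       # e.g. the hand degrees of freedom quoted in the table


def make_layer(rng: random.Random, n_in: int, n_out: int) -> list[list[float]]:
    """Create an n_out x n_in weight matrix with small random values."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]


def apply_layer(weights: list[list[float]], inputs: list[float]) -> list[float]:
    """Dense layer with tanh activation, so outputs stay in [-1, 1]."""
    return [math.tanh(sum(w * x for w, x in zip(row, inputs))) for row in weights]


rng = random.Random(0)
backbone = make_layer(rng, PIXELS, FEATURE_DIM)          # shared by both platforms
car_head = make_layer(rng, FEATURE_DIM, CAR_OUTPUTS)     # platform-specific
robot_head = make_layer(rng, FEATURE_DIM, ROBOT_OUTPUTS)  # platform-specific


def act(frame: list[float], head: list[list[float]]) -> list[float]:
    """Run the shared backbone, then the chosen action head."""
    features = apply_layer(backbone, frame)
    return apply_layer(head, features)


frame = [rng.random() for _ in range(PIXELS)]
car_cmd = act(frame, car_head)
robot_cmd = act(frame, robot_head)
print(len(car_cmd), len(robot_cmd))  # 3 22
```

Both calls reuse the same `backbone` weights; only the final layer, and therefore the number and meaning of the outputs, differs between the two platforms.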

The “Brain” of the Optimus Gen 3 is the AI5 chip, which represents a massive leap over the previous Hardware 4.0 found in older Tesla vehicles.

TOPS stands for tera operations per second. The AI5 chip is capable of 2,500 TOPS, that is, 2,500 trillion operations per second. In a humanoid bot, this raw power is used to run multiple neural networks simultaneously.

By moving to a 3nm (nanometer) process, Tesla has made the brain more energy-efficient. This is critical for the Optimus Gen 3 because every watt used for “thinking” is a watt taken away from “moving.” The AI5 chip is reportedly about 10x more powerful than HW4 while consuming significantly less power per operation.
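
To put the 2,500 TOPS figure in perspective, the short calculation below splits it evenly across camera frames. The eight-camera count comes from the article's description of Tesla Vision. The 30 frames per second rate and the even split are assumptions made purely for illustration.

```python
# Back-of-the-envelope compute budget from the article's 2,500 TOPS figure.
# The frame rate is an assumption for illustration only.

TOPS = 2_500                      # tera operations per second (article's figure)
OPS_PER_SECOND = TOPS * 10**12
CAMERAS = 8                       # camera count given in the article
ASSUMED_FPS = 30                  # hypothetical per-camera frame rate


def ops_per_frame(ops_per_second: int, cameras: int, fps: int) -> float:
    """Peak operations available per camera frame if compute is split evenly."""
    return ops_per_second / (cameras * fps)


budget = ops_per_frame(OPS_PER_SECOND, CAMERAS, ASSUMED_FPS)
print(f"{budget / 1e12:.1f} tera operations per frame")
```

Under these assumptions the peak budget is about 10.4 tera operations per camera frame. Sustained throughput on real workloads is typically lower than a peak TOPS rating.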

The brain does more than think; it talks. Optimus Gen 3 uses a 48-volt electrical setup. This allows for thinner wiring and faster data transmission between the brain and the actuators (the muscles). This is the same architecture used in the Cybertruck, which shows how Tesla’s automotive innovations serve as building blocks for its robotics.

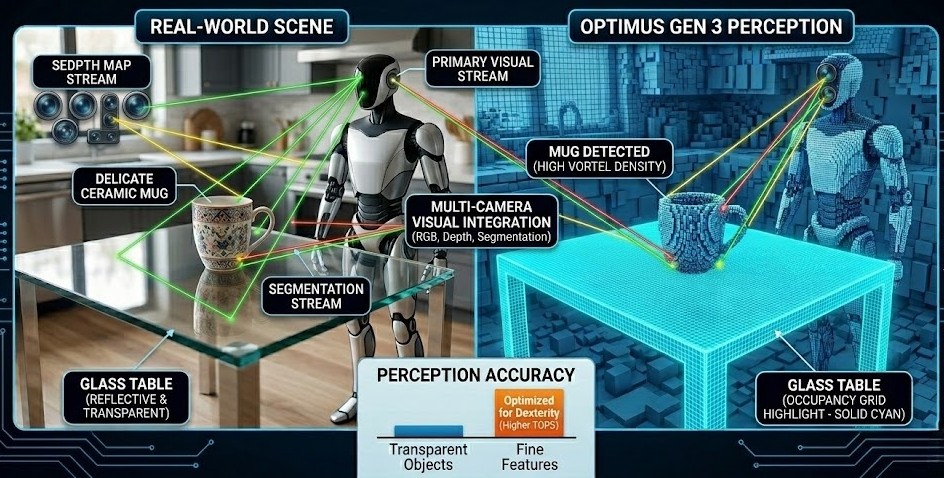

A brain is useless without senses. The Optimus Gen 3 perceives the world through a suite of sensors that feed directly into the FSD neural network.

Optimus stays away from the bulky LiDAR sensors you see on Boston Dynamics or Agility bots. It finds its way using Tesla Vision instead. This system builds its world view using eight sharp, high-res cameras.

New to the Gen 3 is the integration of tactile sensing into the FSD pipeline. The fingers on Optimus have a special “skin” that feels pressure. Once the robot touches something, the AI5 chip gets that data and tweaks the motor power instantly. This “Vision-Touch” cycle helps the bot grab a strawberry without squashing it. It is a trick the robot just could not do with cameras by themselves.
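
A “Vision-Touch” cycle of this kind resembles a simple feedback loop: read the fingertip pressure, compare it with a target, and nudge the motor force. The sketch below uses a basic proportional controller as a stand-in. The sensor model, the constants, and the function names are all hypothetical, and the article does not say which control method Tesla uses.

```python
# Minimal sketch of a touch-feedback grip loop: tighten until the fingertip
# pressure reaches a target, and release if a crush limit is ever exceeded.
# All names, units, and constants are hypothetical.

TARGET_PRESSURE = 0.30   # desired contact pressure (arbitrary units)
CRUSH_LIMIT = 0.50       # pressure at which a soft object would be damaged
GAIN = 0.5               # proportional gain of the controller


def simulated_pressure(grip_force: float) -> float:
    """Stand-in for the tactile sensor: pressure rises linearly with force."""
    return 0.8 * grip_force


def grip(steps: int = 50) -> tuple[float, float]:
    """Run the loop and return (final_force, peak_pressure)."""
    force = 0.0
    peak = 0.0
    for _ in range(steps):
        pressure = simulated_pressure(force)
        peak = max(peak, pressure)
        if pressure > CRUSH_LIMIT:
            return 0.0, peak                     # release rather than risk damage
        error = TARGET_PRESSURE - pressure
        force = max(0.0, force + GAIN * error)   # grip force cannot go negative
    return force, peak


final_force, peak_pressure = grip()
print(f"force={final_force:.3f} peak_pressure={peak_pressure:.3f}")
```

With these constants the force rises smoothly toward the value that produces the target pressure and never approaches the crush limit. A camera-only system would have no `pressure` signal to close this loop.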

The AI5 chip runs the software that really makes this work. By 2026, Tesla had moved to Vision-Language-Action (VLA) models.

Older versions used separate code for seeing and planning. Now, the system is End-to-End. Raw video goes into the network, and motor commands come out. This makes the robot move in a smooth, natural way. It stops the jerky, robotic motions seen in the past.

The “Brain” now features a natural language interface powered by Grok AI. This serves as the translator between human intent and robotic action.

According to Elon Musk’s public statements, the integration of Grok allows Optimus to handle “unstructured requests,” making it the first truly general-purpose household assistant.

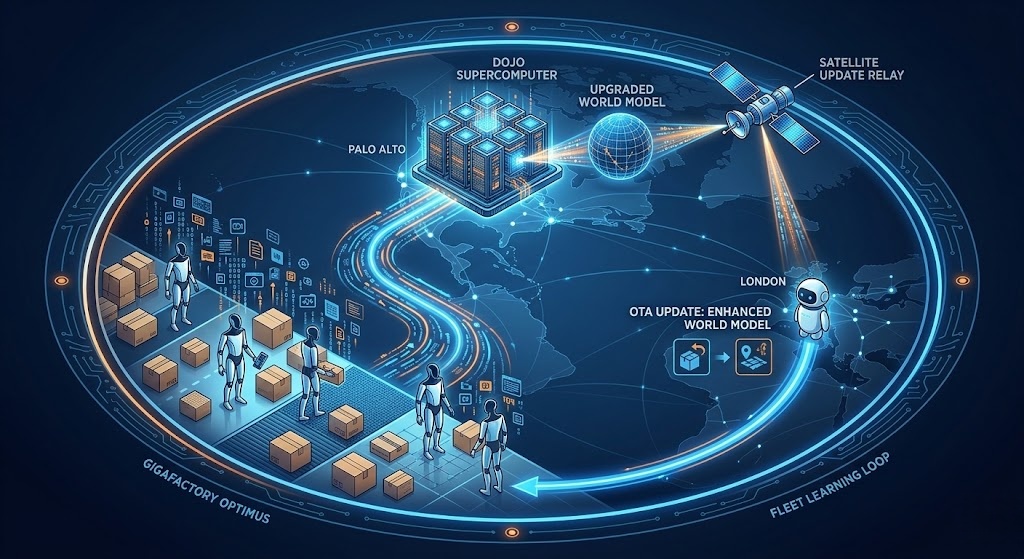

The FSD brain inside the robot is only half the story. The other half is the Dojo Supercomputer, Tesla’s massive training ground in Palo Alto.

Whenever a Tesla Bot at a Gigafactory encounters a new type of box or a new flooring surface, that data is sent to Dojo.

This means if a bot in Texas learns to walk on a slick floor, your robot in London gets that same skill by morning. This Fleet Learning system is a huge advantage. No other robotics firm can compete with this speed.

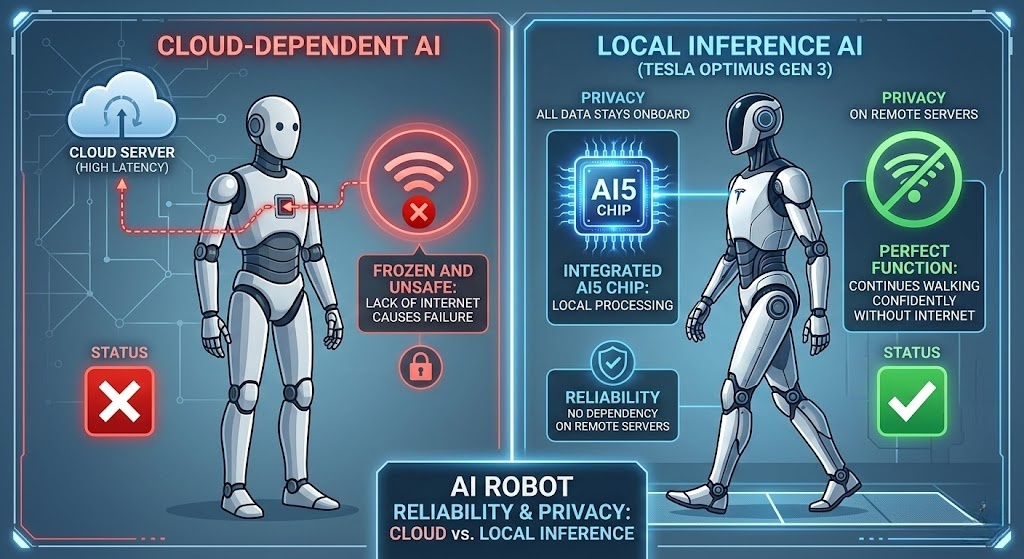

A major concern with AI-powered robots is privacy and safety. Tesla has built the FSD brain with several hard-coded safeguards.

Most AI tools (like ChatGPT) need a cloud connection to think. The Optimus Gen 3 uses Local Inference instead. This means the choice to stop walking or drop an object happens right on the robot’s AI5 chip. This keeps the robot safe and working even if your Wi-Fi cuts out.

The brain monitors the “current draw” of every motor. If the brain detects an unexpected resistance—such as a child’s hand in the way of a closing drawer—it halts all movement in less than 5 milliseconds. This is faster than human reaction time, making the bot physically safer than many traditional home appliances.
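
The current-draw check described above can be pictured as a watchdog that compares each motor reading with what the motion should need. The sketch below is a simplified illustration. The thresholds, the sample values, and the function names are assumptions, and a real system would also have to sample fast enough to meet the stated 5 millisecond budget.

```python
from typing import Optional

# Sketch of a current-draw watchdog: if a motor draws much more current than
# expected, assume an obstruction and stop. Thresholds are hypothetical.

EXPECTED_AMPS = 2.0      # nominal current for the motion (assumed)
TOLERANCE_AMPS = 0.8     # allowed excess before halting (assumed)


def first_fault_index(samples: list[float]) -> Optional[int]:
    """Return the index of the first over-current sample, or None."""
    for i, amps in enumerate(samples):
        if amps - EXPECTED_AMPS > TOLERANCE_AMPS:
            return i
    return None


def run_motion(samples: list[float]) -> str:
    """Simulate one motion; halt as soon as the watchdog trips."""
    fault = first_fault_index(samples)
    if fault is None:
        return "completed"
    return f"halted at sample {fault}"


# A drawer closing normally, then meeting unexpected resistance.
readings = [2.0, 2.1, 1.9, 2.2, 3.5, 4.0]
print(run_motion(readings))  # halted at sample 4
```

The motion stops at the first reading that exceeds the allowed excess, so the later and larger spike is never acted on.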

The humanoid robotics landscape has shifted dramatically by February 2026. Tesla’s Optimus Gen 3 is currently winning the race for mass production and AI integration. Meanwhile, the Figure 03 model is setting new standards for domestic use, and Agility’s Digit continues to dominate the heavy lifting in warehouse logistics.

Here is how the three top contenders compare:

| Feature | Tesla Optimus Gen 3 | Figure 03 | Agility Robotics Digit (V4) |
| --- | --- | --- | --- |
| Primary Target | Manufacturing & Future Home | Home Assistant & Service | Logistics & Warehousing |
| Dexterity | 22 DoF Hands | Proprietary Helix Hands | Purpose-built Grippers |
| Tactile Sensitivity | High (Finger-tip sensors) | Ultra-High (3g detection) | Standard (Industrial grip) |
| AI Architecture | FSD-based (End-to-End) | Helix VLA (Vision-Lang-Action) | ARC (Agility Controls) |
| Charging Method | Back-Dock / Magnetic | Inductive (Feet/Wireless) | Battery Swapping / Docking |
| Runtime | 10–12 Hours (Optimized) | ~5 Hours | 8–12 Hours (Shift-ready) |
| Safety Design | Rigid (Safe-state protocols) | Soft-goods & Foam cladding | Industrial (Safety rated) |
| Estimated Price | ~$20,000 – $30,000 | ~$20,000 – $50,000 | ~$250,000 (or RaaS) |

A: They share the same Vision Foundation Model and hardware (AI5). However, the “Action Head”—the part of the code that controls the motors—is specialized for humanoid joints rather than steering and braking.

A: Yes. Through In-Context Learning, the robot can “watch” you perform a task via its cameras and use its neural network to mimic the movement, a process known as “Behavioral Cloning.”

A: Tesla utilizes Edge Computing. Visual data used for navigation is processed locally on the AI5 chip. Unless the user explicitly opts in to data sharing to help train the global model, the images never leave the robot’s internal storage.

A: The Optimus features a Dual-Redundant Architecture. There are two AI5 chips running in parallel. If one chip fails or experiences an error, the second chip takes over to safely bring the robot to a “Safe State”.
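
The failover behavior in the last answer can be sketched as a small selection rule run on every control tick. This is an illustrative model only. The `Controller` class, the `step` function, and the fallback for a double failure are hypothetical and are not drawn from Tesla documentation.

```python
from dataclasses import dataclass

# Sketch of dual-redundant failover: a standby controller takes over when the
# primary stops reporting healthy, and commands a safe state.
# Class and function names are hypothetical.


@dataclass
class Controller:
    name: str
    healthy: bool = True

    def command(self, action: str) -> str:
        return f"{self.name}: {action}"


def step(primary: Controller, standby: Controller, action: str) -> str:
    """Pick which controller drives the robot on this control tick."""
    if primary.healthy:
        return primary.command(action)
    if standby.healthy:
        # The standby does not resume the task; it brings the robot to rest.
        return standby.command("enter safe state")
    return "no healthy controller: cut actuator power"


chip_a = Controller("chip A")
chip_b = Controller("chip B")
print(step(chip_a, chip_b, "continue task"))  # chip A: continue task
chip_a.healthy = False
print(step(chip_a, chip_b, "continue task"))  # chip B: enter safe state
```

The design choice worth noting is that the standby chip aims for a safe stop rather than finishing the task, which matches the “Safe State” wording in the answer.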

We are no longer in the era of “dumb” robots that can only perform one task. Thanks to the AI5 chip and the power of Dojo-trained neural networks, we have entered the era of General Purpose Robotics. The Tesla Optimus Gen 3 is more than just a robot; it is the physical manifestation of a decade of AI development.

Which part of Tesla’s bot tech actually blows your mind? Is it that massive AI5 processing power, or do you think the “Pure Vision” setup is more impressive? Drop a comment below and let us know what you think.